The Operating Room (OR) is a high-tech environment that relies heavily on electronic devices, information systems and video equipment, all of which exchange, process or display signals containing key data about the underlying surgical process. Although these signals could contribute to the development of intelligent applications for the OR, they are currently used solely for the immediate performance of the surgery. The fundamental idea behind our research is that automatically recording, processing and analyzing the large amount of multi-modal surgical signals available in the OR, an endeavor largely unattempted so far, can lead to new assistance and decision support tools for surgeons and surgical staff.

Consequently, the research group on Computational Modeling and Analysis of Medical Activities aims to develop a multi-sensor data acquisition system for the OR and to devise novel methods to perceive, model, analyze and assist surgical activities. These methods will be used to design a new generation of context-aware assistance systems for the OR that can help the surgical team perform routine tasks and better process and visualize the large amount of information generated during a surgical procedure. Our research lies at the intersection of computer vision, medical robotics, augmented reality and machine learning. In particular, we seek to leverage the large amount of data collected from medical devices and sensors used in a wide variety of surgical procedures to develop new algorithms and solutions for the modeling, recognition and assistance of clinical activities.

A first application of our research is the monitoring of radiation during image-guided interventions to address the increased exposure of surgical staff to X-rays generated by imaging devices.

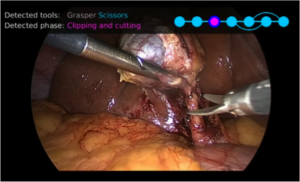

A second application is the development of tools to automatically analyze endoscopic surgeries for real-time safety monitoring, video database indexing and surgical education.

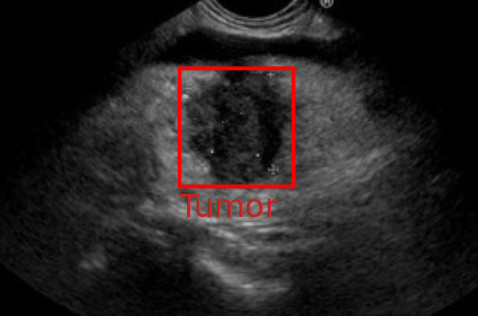

A third application is the design of enhanced methods for navigation and image analysis to support challenging endoscopic ultrasound procedures.